Six months ago, building an AI assistant meant wiring a chatbot to the OpenAI API and hoping users would not notice when it forgot their name between sessions. That era is over. The open source AI agent frameworks that launched in early 2026 do not just respond to prompts. They remember across sessions, create their own reusable skills from experience, run on a schedule without being asked, and execute real work on your machine while you sleep.

Two frameworks dominate the conversation right now. Hermes Agent from Nous Research has crossed 100,000 GitHub stars since its February launch by betting everything on a self-improving architecture where the agent literally writes its own instruction files. OpenClaw, the fastest-growing open source repository in GitHub history, took a different path: maximum reach across every messaging platform imaginable, a massive community skill marketplace, and a philosophy of "your personal AI assistant, running locally." Alongside them, a newer project called Paperclip is pushing the concept even further by treating AI agents not as tools but as employees in a fully autonomous company structure.

This post breaks down the architectures, the trade-offs, and the security realities of each framework so you can decide which one actually fits your use case instead of chasing star counts.

The Shift Nobody Saw Coming

The old model of AI automation was stateless by design. You sent a prompt, got a response, and the next session started from zero. Every context window was a blank slate. That constraint shaped an entire generation of products: chatbot widgets, single-turn assistants, Q&A bots bolted onto help desks. They were useful, but fundamentally limited to tasks a human could describe completely in a single message.

What changed in 2026 is persistence. The new generation of agent frameworks maintains memory across sessions, accumulates knowledge over time, and takes autonomous action on schedules without waiting for a human to type something. The technical foundations (vector databases, long-context windows, tool-calling APIs) existed in 2025, but nobody had packaged them into a framework that a solo developer could deploy on a $10 VPS and have running within an afternoon.

Hermes Agent and OpenClaw both solved that packaging problem, but they made fundamentally different bets about what matters most.

Hermes Agent: The Agent That Writes Its Own Playbook

Hermes Agent launched on February 25, 2026, and hit 95,000 GitHub stars in seven weeks. It is built by Nous Research, the lab behind the Hermes model family and the Psyche decentralized training network, and released under the MIT license. The current version ships with 47 built-in tools, six terminal backends, and native support for 18 messaging platforms from a single gateway deployment.

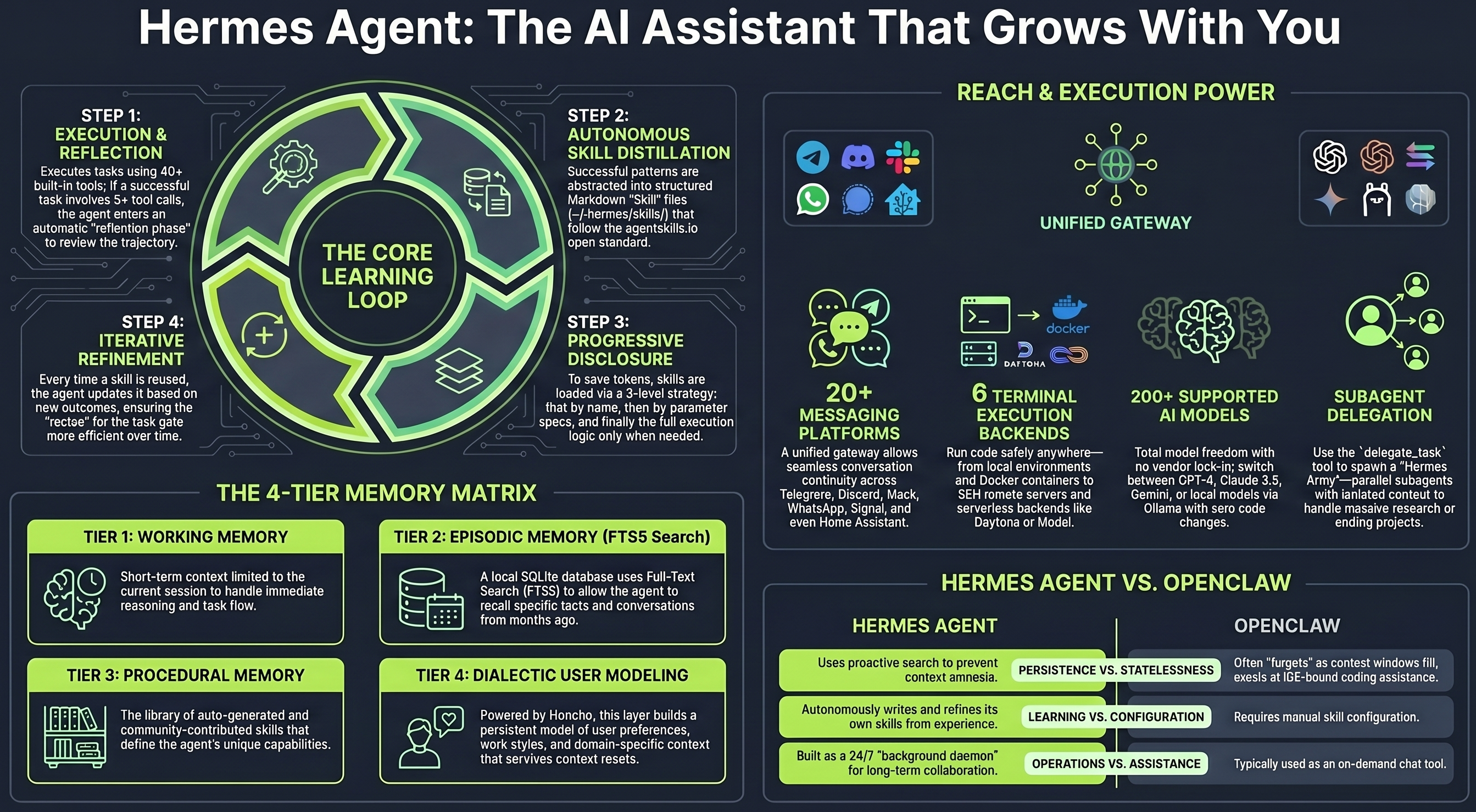

The headline feature is the closed learning loop. After completing a complex task (typically one involving five or more tool calls), Hermes evaluates what it did, identifies reusable patterns, and writes a Markdown skill file that it stores locally. The next time it encounters a similar problem, it loads the relevant skill and solves the problem faster, without needing to be walked through the steps again. These skill files are human-readable, editable, and portable. You can inspect exactly what the agent learned, modify it, or share it with the community through the agentskills.io registry.
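To make the loop concrete, here is a minimal sketch of the two halves: writing a skill file after a sufficiently complex task, and finding a matching skill for a new request. This is not Hermes's code or file format. The `CompletedTask` fields, the `Keywords:` line, the keyword-overlap matching, and the `skills/` directory layout are all hypothetical. Only the "five or more tool calls" threshold comes from the description above.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from pathlib import Path

# Threshold taken from the text ("five or more tool calls"); everything else is hypothetical.
MIN_TOOL_CALLS = 5


@dataclass
class CompletedTask:
    title: str
    steps: list[str]      # tool calls the agent made, in order
    keywords: list[str]   # single words used later to match similar requests


def maybe_write_skill(task: CompletedTask, skills_dir: Path) -> Path | None:
    """Write a Markdown skill file if the task was complex enough to be worth reusing."""
    if len(task.steps) < MIN_TOOL_CALLS:
        return None
    skills_dir.mkdir(parents=True, exist_ok=True)
    slug = re.sub(r"[^a-z0-9]+", "-", task.title.lower()).strip("-")
    lines = [f"# {task.title}", "", f"Keywords: {', '.join(task.keywords)}", "", "## Steps"]
    lines += [f"{i}. {step}" for i, step in enumerate(task.steps, start=1)]
    path = skills_dir / f"{slug}.md"
    path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return path


def find_skill(request: str, skills_dir: Path) -> str | None:
    """Return the text of the skill whose keywords overlap most with the request."""
    words = set(re.findall(r"\w+", request.lower()))
    best, best_score = None, 0
    for path in sorted(skills_dir.glob("*.md")):
        text = path.read_text(encoding="utf-8")
        match = re.search(r"^Keywords: (.*)$", text, re.MULTILINE)
        keywords = {k.strip().lower() for k in match.group(1).split(",")} if match else set()
        score = len(words & keywords)
        if score > best_score:
            best, best_score = text, score
    return best
```

Because the output is plain Markdown, a human can open `skills/weekly-competitor-report.md`, fix a bad step, and the next lookup picks up the edit.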

This is not a marketing claim dressed up in buzzwords. The skill files sit on your disk as plain text. You can open them, read them, and verify whether the agent actually learned something useful or wrote garbage. Independent benchmarks from TokenMix showed that self-created skills reduced research task completion time by roughly 40 percent compared to a fresh agent instance with no accumulated skills.

Memory Architecture

Hermes uses a three-layer memory system. The first layer is an FTS5 full-text search index built on SQLite that stores every past session and allows the agent to retrieve context from any previous conversation using natural language queries. The second layer is Honcho, a dialectic user modeling system that builds and continuously updates a persistent model of who you are, how you work, and what you prefer across all sessions. The third layer is the skill library itself, which functions as procedural memory: reusable workflows the agent has extracted from its own experience.
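The first layer is the easiest to picture in code. The sketch below uses Python's built-in `sqlite3` module to store session transcripts in an FTS5 table and return the best matches for a question. It illustrates the technique, not Hermes's schema. The `sessions` table and its columns are hypothetical, and the sketch assumes your SQLite build includes FTS5 (most modern builds do). Turning a natural language question into an `OR` query over its words is a deliberately simple stand-in for whatever query handling Hermes actually performs.

```python
import re
import sqlite3


def open_memory(path: str = ":memory:") -> sqlite3.Connection:
    """Create (or open) a full-text index of past sessions. Schema is hypothetical."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE VIRTUAL TABLE IF NOT EXISTS sessions "
        "USING fts5(session_id UNINDEXED, content)"
    )
    return conn


def remember(conn: sqlite3.Connection, session_id: str, content: str) -> None:
    conn.execute("INSERT INTO sessions (session_id, content) VALUES (?, ?)", (session_id, content))


def recall(conn: sqlite3.Connection, question: str, limit: int = 3) -> list[tuple[str, str]]:
    """Return up to `limit` past sessions, best BM25 match first."""
    terms = re.findall(r"\w+", question.lower())
    if not terms:
        return []
    # Quote each word so punctuation in the question cannot break FTS5 query syntax.
    query = " OR ".join(f'"{t}"' for t in terms)
    return conn.execute(
        "SELECT session_id, content FROM sessions WHERE sessions MATCH ? ORDER BY rank LIMIT ?",
        (query, limit),
    ).fetchall()
```

FTS5's hidden `rank` column defaults to BM25, so sessions that share more (and rarer) words with the question sort first. That is why a keyword index alone can answer "what tone did I use for that client email?" reasonably well.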

The practical effect is that Hermes remembers not just what you talked about last Tuesday but how you like things done. Ask it to draft a client email three months from now and it will use the tone, structure, and formatting patterns it observed in your earlier sessions without you having to explain your preferences again.

Deployment Flexibility

Hermes runs on six different execution backends: local shell, Docker containers, SSH into remote machines, Daytona workspaces, Singularity containers, and Modal for serverless. The serverless option is particularly interesting because the agent hibernates when idle and only spins up when a scheduled task fires or a message arrives, keeping costs near zero during quiet periods.

You can run a single Hermes deployment and interact with it simultaneously across Telegram, Discord, Slack, WhatsApp, Signal, Matrix, email, SMS, and several other platforms. One install, one memory, one growing skill library, accessible from wherever you already communicate. The gateway handles all platform translation so the agent sees a unified conversation regardless of which channel a message arrived on.
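The gateway idea boils down to normalizing every incoming message into one shape and keying memory on a person rather than on a platform account. The sketch below shows that with two simplified payloads loosely modeled on Telegram and Slack webhooks. The payload fields are trimmed for illustration, and `IDENTITY_MAP`, `UnifiedMessage`, and `HISTORY` are hypothetical names, not Hermes internals.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UnifiedMessage:
    user_id: str   # one internal identity, shared across every platform
    platform: str  # kept for routing replies; memory does not depend on it
    text: str


# Hypothetical mapping from (platform, platform-specific sender id) to one person.
IDENTITY_MAP = {
    ("telegram", "123456"): "alice",
    ("slack", "U0ALICE"): "alice",
}

# One conversation history per person, regardless of the channel used.
HISTORY: defaultdict[str, list[UnifiedMessage]] = defaultdict(list)


def normalize(platform: str, payload: dict) -> UnifiedMessage:
    """Translate a simplified platform payload into the agent's single message format."""
    if platform == "telegram":
        message = payload["message"]
        sender, text = str(message["from"]["id"]), message.get("text", "")
    elif platform == "slack":
        event = payload["event"]
        sender, text = event["user"], event.get("text", "")
    else:
        raise ValueError(f"unsupported platform: {platform}")
    # Unknown senders get a namespaced id so ids from two platforms never collide.
    user_id = IDENTITY_MAP.get((platform, sender), f"{platform}:{sender}")
    return UnifiedMessage(user_id=user_id, platform=platform, text=text)


def receive(platform: str, payload: dict) -> UnifiedMessage:
    """Normalize a message and append it to that person's shared history."""
    message = normalize(platform, payload)
    HISTORY[message.user_id].append(message)
    return message
```

A message Alice sends on Telegram and a follow-up she sends on Slack land in the same `HISTORY["alice"]` list. That is what "one memory, accessible from wherever you already communicate" means in practice.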

For a solo operator or small team, the realistic monthly cost is the VPS hosting (as low as $5 to $10 on providers like Hostinger or Hetzner) plus LLM API costs, which run roughly $0.30 per complex task on budget models through OpenRouter. No subscription. No per-seat pricing. No vendor lock-in, because Hermes supports any OpenAI-compatible provider, including Anthropic, Google, and open source models.
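A quick back-of-the-envelope calculation shows how those two numbers combine. The task volume below is a made-up example. The $0.30 default is the rough per-task estimate quoted above, and your real figure will depend on the model and the size of your prompts.

```python
def monthly_cost(vps_usd: float, tasks_per_month: int, cost_per_task_usd: float = 0.30) -> float:
    """Hosting plus LLM API spend for one month, in US dollars."""
    return round(vps_usd + tasks_per_month * cost_per_task_usd, 2)


# Ten complex tasks a day (about 300 a month) on a $10 VPS:
print(monthly_cost(10, 300))  # 100.0
```

At that volume the API bill ($90) is nine times the hosting bill. Model choice and task volume therefore matter far more than which VPS provider you pick.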

OpenClaw: Scale, Community, and a Security Wake-Up Call

OpenClaw started as a weekend project by Austrian developer Peter Steinberger in November 2025 under the name Clawdbot: a lobster-themed personal AI assistant that ran locally and talked to you through WhatsApp or Telegram. A Hacker News post in late January 2026 sent it viral. Within 24 hours it had 20,000 stars. Within 60 days it had overtaken React's GitHub star count, a total React had taken a decade to build. By April 2026, the repository had crossed 300,000 GitHub stars and accumulated over 3 million users.

The speed of adoption is hard to overstate. OpenClaw became the default "first agent" for an enormous number of developers and non-technical users who wanted an always-on AI assistant running on their own hardware. The architecture prioritizes breadth: 20-plus messaging platform integrations, a massive community-built skill marketplace called ClawHub with thousands of contributed skills, local-first memory stored as Markdown files on your own disk, and a heartbeat scheduler that wakes the agent on a configurable cadence to check for tasks.

The community ecosystem is OpenClaw's greatest strength. Need your agent to monitor GitHub issues? There is a ClawHub skill for that. Want it to triage your inbox every morning? There is a skill for that too. Automate LinkedIn outreach, parse invoices, negotiate with vendors over email? All community-contributed, all installable in seconds.

The Security Crisis

OpenClaw's greatest strength also became its greatest vulnerability. On January 30, 2026, security researcher Mav Levin disclosed CVE-2026-25253, a cross-site WebSocket hijacking vulnerability with a CVSS score of 8.8. The flaw enabled one-click remote code execution: clicking a crafted link sent the user's authentication token to an attacker-controlled server, which could then use it to run arbitrary code on the victim's machine. Scans revealed over 135,000 publicly exposed OpenClaw instances at the time of disclosure.
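For context on the bug class, here is the textbook defense against cross-site WebSocket hijacking in general. It is not a reconstruction of CVE-2026-25253 or of OpenClaw's patch. The server refuses any handshake whose `Origin` header is not on an allowlist, and it also requires an explicit token. Browsers cannot attach custom headers to a WebSocket handshake, so the sketch assumes the client presents the token after connecting, for example in its first message. The allowed origins and port are placeholders.

```python
import hmac

# Hypothetical: the only pages allowed to talk to a local agent gateway.
ALLOWED_ORIGINS = frozenset({"http://127.0.0.1:8080", "http://localhost:8080"})


def origin_allowed(handshake_headers: dict[str, str]) -> bool:
    """Reject handshakes initiated by pages on other sites (the core CSWSH defense)."""
    # HTTP header names are case-insensitive, so normalize before looking one up.
    lowered = {name.lower(): value for name, value in handshake_headers.items()}
    return lowered.get("origin") in ALLOWED_ORIGINS


def token_valid(presented: str, expected: str) -> bool:
    """Compare tokens in constant time so they cannot be guessed via timing."""
    return hmac.compare_digest(presented.encode(), expected.encode())


def accept_connection(handshake_headers: dict[str, str], presented_token: str, expected_token: str) -> bool:
    # Both checks must pass: a correct token from the wrong origin is still refused.
    return origin_allowed(handshake_headers) and token_valid(presented_token, expected_token)
```

The two checks cover different failures. The origin check stops a malicious web page from piggybacking on a browser that can reach your agent. The token stops anything that is not a browser, where `Origin` can simply be forged.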

The marketplace was hit even harder. Bitdefender's audit found 824 malicious skills on ClawHub, roughly 20 percent of the entire registry at the time of the scan. A malware campaign dubbed ClawHavoc distributed infostealers through skills that looked legitimate. Snyk's ToxicSkills audit confirmed 76 malicious payloads in a separate scan of nearly 4,000 skills.

In a four-day period, nine additional CVEs were disclosed, eight of them rated critical. Microsoft's Defender team published guidance on running OpenClaw safely. NVIDIA announced NemoClaw at GTC 2026, an open source security layer that adds kernel-level sandboxing to OpenClaw deployments. The OpenClaw team responded quickly with patches, mandatory authentication tokens, and a new skill vetting process, but the damage to trust was real.

The core architectural issue is that OpenClaw gives an autonomous agent shell access, browser control, and the ability to send messages on your behalf on a loop, without asking each time. That is powerful. It is also an enormous attack surface when the skill marketplace and authentication layer are not locked down.

Hermes Agent, by comparison, defaults to containerized Docker execution and had no agent-specific CVEs reported as of May 2026. This is partly because it is newer and partly because its architecture isolates execution more aggressively by default.
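To show what "containerized by default" can mean in practice, here is a helper that wraps each shell command an agent wants to run in a throwaway Docker container. The container has no network, a read-only root filesystem, no Linux capabilities, and memory and process limits. The flags are standard `docker run` options. The image name, the limits, and the `/work` mount point are placeholder choices, and this is not Hermes's own Docker backend.

```python
from __future__ import annotations

import subprocess


def sandboxed_argv(command: str, image: str = "python:3.12-slim", workdir: str | None = None) -> list[str]:
    """Build a `docker run` invocation that executes `command` in a locked-down container."""
    argv = [
        "docker", "run", "--rm",                  # delete the container when it exits
        "--network", "none",                      # no inbound or outbound network access
        "--read-only",                            # root filesystem cannot be modified
        "--cap-drop", "ALL",                      # drop every Linux capability
        "--security-opt", "no-new-privileges",    # block setuid privilege escalation
        "--memory", "512m",                       # cap memory use
        "--pids-limit", "128",                    # cap process count (fork bombs)
    ]
    if workdir:
        # Expose one host directory (absolute path) as the only writable workspace.
        argv += ["-v", f"{workdir}:/work", "-w", "/work"]
    argv += [image, "sh", "-c", command]
    return argv


def run_sandboxed(command: str, **kwargs) -> subprocess.CompletedProcess:
    """Run the command under Docker and capture its output; requires Docker installed."""
    return subprocess.run(sandboxed_argv(command, **kwargs), capture_output=True, text=True, timeout=120)
```

Cutting off the network matters most here. Even if a malicious skill runs, it cannot phone home with whatever it finds. Tasks that genuinely need web access would need a narrower policy than `--network none`.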

Head-to-Head Comparison

The table below captures the key differences that actually matter when choosing between the two.

| Dimension | Hermes Agent | OpenClaw |
|---|---|---|
| Primary strength | Self-improving via closed learning loop | Massive community skill ecosystem |
| GitHub stars (May 2026) | ~101,000 | ~300,000+ |
| Launch date | February 25, 2026 | November 2025 (as Clawdbot) |
| License | MIT | MIT |
| Memory model | FTS5 + Honcho dialectic modeling + procedural skill library | Markdown files on disk with file compaction |
| Learning capability | Creates and refines skill files from experience | No internal learning loop; relies on community skills |
| Messaging platforms | 18 from a single gateway | 20+ from a single gateway |
| Execution backends | 6 (local, Docker, SSH, Daytona, Singularity, Modal) | Local, Docker |
| Security posture (May 2026) | Zero agent-specific CVEs; containerized by default | CVE-2026-25253 (CVSS 8.8), then 9 more CVEs in 4 days; ClawHub marketplace compromised |
| Skill ecosystem | Growing (agentskills.io); agent creates its own | Massive (ClawHub); community-contributed |
| Best for | Teams that need an agent that compounds in capability over time | Users who want breadth of integrations and a huge template library immediately |
| Realistic monthly cost | $5 to $15 VPS + API costs | $5 to $15 VPS + API costs |

Neither framework is universally better. OpenClaw gets you to a working agent faster because someone has probably already built the skill you need. Hermes gets you to a better agent over time because it learns from your specific workflows and accumulates institutional knowledge that a community skill cannot replicate.

Many teams are now running hybrid deployments: OpenClaw as the customer-facing gateway handling high-volume, lower-complexity interactions, with Hermes handling recurring backend tasks where the self-improvement loop compounds into real efficiency gains over weeks and months.

Paperclip and the Zero Human Company

If Hermes Agent and OpenClaw are individual employees, Paperclip is the company they work for. Launched in March 2026, Paperclip crossed 60,000 GitHub stars within its first month and sits at roughly 64,000 as of May 2026. It is built as a Node.js server with a React dashboard and released under the MIT license.

Paperclip does not build agents. It takes existing agents (Hermes, OpenClaw, Claude Code, Codex, custom scripts, anything that can receive an HTTP webhook) and orchestrates them into a functioning organizational structure. You define a CEO agent, a CTO agent, marketing agents, developer agents, and QA agents. Each has a role defined in Markdown, a reporting line, a task inbox, and a monthly token budget. Goals flow top down from the company mission through department heads to individual workers.
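The structure described above can be modeled with a few plain records. The sketch covers the pieces named in the text: a Markdown role, a reporting line, an inbox, and a monthly token budget for each agent, plus a parent-task chain that ties every piece of work back to the mission. The class and field names are hypothetical. Paperclip itself is a Node.js server with its own schema, so treat this as a model of the idea rather than its data format.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AgentRole:
    name: str
    role_md: str                  # the role definition, written in Markdown
    reports_to: str | None        # None only for the CEO agent
    monthly_token_budget: int
    inbox: list[str] = field(default_factory=list)


@dataclass
class Task:
    id: str
    title: str
    parent: str | None            # None only for the company mission itself


def chain_of_command(agents: dict[str, AgentRole], name: str) -> list[str]:
    """Follow reporting lines from an agent up to the CEO."""
    chain = [name]
    while agents[chain[-1]].reports_to is not None:
        chain.append(agents[chain[-1]].reports_to)
    return chain


def trace_to_mission(tasks: dict[str, Task], task_id: str) -> list[str]:
    """Return task titles from `task_id` up to the mission, or fail if the chain breaks."""
    path, seen = [], set()
    current = tasks.get(task_id)
    while current is not None:
        if current.id in seen:
            raise ValueError("cycle in task hierarchy")
        seen.add(current.id)
        path.append(current.title)
        if current.parent is None:
            return path
        current = tasks.get(current.parent)
    raise ValueError(f"task {task_id} does not trace back to the mission")
```

A task that cannot be traced to the mission raises an error instead of being silently scheduled. That enforces "if a task cannot be justified by the company mission, it should not exist" mechanically.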

The execution model is called the heartbeat. Agents do not run continuously. They wake up on a scheduled cadence (every 15 minutes, every hour, whatever you configure), check their task inbox, execute assigned work, log the results, and go back to sleep. This keeps token costs manageable because agents are not burning API calls while sitting idle.
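The heartbeat cycle (wake, check inbox, execute, log, sleep) maps onto a small clock-driven scheduler. `HeartbeatAgent` and `tick` are hypothetical names, not Paperclip's API. Time is passed in as an integer number of seconds so the logic can be tested without actually sleeping.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class HeartbeatAgent:
    name: str
    interval_s: int                   # e.g. 900 for a 15-minute heartbeat
    handle: Callable[[str], str]      # hypothetical: performs one task, returns a result
    inbox: list[str] = field(default_factory=list)
    next_wake: int = 0                # timestamp (seconds) of the next scheduled wake-up
    log: list[str] = field(default_factory=list)


def tick(agents: list[HeartbeatAgent], now: int) -> None:
    """Wake each agent whose heartbeat is due, drain its inbox, log results, reschedule."""
    for agent in agents:
        if now < agent.next_wake:
            continue  # still asleep: no model calls, so no tokens spent
        while agent.inbox:
            task = agent.inbox.pop(0)
            agent.log.append(f"{now}: {task} -> {agent.handle(task)}")
        agent.next_wake = now + agent.interval_s  # back to sleep until the next beat
```

A real deployment would call `tick(agents, int(time.time()))` from a cron job or from a loop around `time.sleep`. Work that arrives between beats simply waits in the inbox, which is the trade-off that keeps idle costs near zero.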

What It Actually Looks Like

A typical Paperclip setup for a solo founder might look like this. The CEO agent reviews strategy and delegates work every morning. A content marketing agent writes and schedules social media posts on a daily heartbeat. A developer agent picks up GitHub issues, writes code, and opens pull requests. A QA agent reviews those pull requests against a checklist. A research agent monitors competitor activity and writes weekly briefing documents.

Each agent's work traces back to the company's top-level goal through a chain of parent tasks. If a task cannot be justified by the company mission, it should not exist. Budget controls automatically pause an agent that hits its monthly token cap or encounters consecutive failures, preventing the runaway cost spirals that plagued earlier multi-agent experiments.
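The two stop conditions described above, a monthly token cap and a run of consecutive failures, fit in a few lines. This is a hypothetical guard object, not Paperclip's implementation, and the default of three consecutive failures is an arbitrary choice for the example.

```python
from dataclasses import dataclass


@dataclass
class BudgetGuard:
    monthly_token_cap: int
    max_consecutive_failures: int = 3   # arbitrary example threshold
    tokens_used: int = 0
    consecutive_failures: int = 0
    paused: bool = False
    reason: str = ""

    def record_run(self, tokens: int, succeeded: bool) -> None:
        """Update counters after one heartbeat and pause the agent if a limit is hit."""
        self.tokens_used += tokens
        # Any success resets the streak; only back-to-back failures count.
        self.consecutive_failures = 0 if succeeded else self.consecutive_failures + 1
        if self.tokens_used >= self.monthly_token_cap:
            self.paused, self.reason = True, "monthly token cap reached"
        elif self.consecutive_failures >= self.max_consecutive_failures:
            self.paused, self.reason = True, f"{self.consecutive_failures} consecutive failures"

    def may_run(self) -> bool:
        """Checked before each heartbeat; a paused agent stays asleep until a human resumes it."""
        return not self.paused
```

The failure counter exists because an agent stuck in a loop can spend tokens quickly without ever hitting its monthly cap. Pausing it after a short streak bounds the damage to a few wasted runs.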

Paperclip also ships pre-built company templates that you can import with one click. Examples include a security auditing firm, a creative strategy agency, and an e-commerce operation. You download the template, customize the roles and goals, connect your LLM credentials, and the "company" starts operating.

The Reality Check

The zero human company concept is genuinely interesting but still experimental. There are no documented examples yet of a business running entirely on Paperclip without human oversight. The code agents work well because code has clear success criteria (tests pass or they do not). Marketing and strategy agents are less reliable because quality is subjective and models still hallucinate confidently. Running multiple agents also burns through API tokens fast. If your agents run on Claude Code, the $200-a-month Claude Max plan is close to a requirement for serious multi-agent setups.

What Paperclip does prove is that the orchestration layer for multi-agent systems was the missing piece. Individual agents are powerful. The hard problem is coordinating them so they do not duplicate work, blow through budgets, or lose context between sessions. Paperclip is the first open source framework to offer org charts, governance, audit trails, and budget enforcement in a single package.

What This Means for Businesses That Automate

The emergence of self improving agents and multi agent orchestration frameworks does not replace the workflow automation layer. It sits on top of it. Hermes Agent still needs to trigger actions in your CRM, send messages through your communication channels, update your databases, and move data between the tools your business actually runs on. The agent decides what to do. The automation infrastructure does it.

This is where platforms like n8n become the execution backbone. An AI agent can determine that a patient needs a follow-up appointment reminder, but it is the n8n workflow that actually sends the WhatsApp message, updates the calendar, and logs the interaction. The agent handles judgment. The workflow handles plumbing. Neither replaces the other.
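Here is what that hand-off can look like from the agent's side. Once the agent has made its decision, it posts a small JSON payload to an n8n Webhook node, and the workflow behind that webhook does the sending, the calendar update, and the logging. The URL and the payload fields (`patient_id`, `action`, `reason`) are placeholders you would define yourself. n8n production webhook URLs follow a `/webhook/<path>` pattern, but copy the exact URL shown on your own Webhook node.

```python
import json
import urllib.request

# Placeholder: replace with the production URL from your own n8n Webhook node.
N8N_WEBHOOK_URL = "https://n8n.example.com/webhook/appointment-reminder"


def build_decision_request(patient_id: str, action: str, reason: str,
                           url: str = N8N_WEBHOOK_URL) -> urllib.request.Request:
    """Package the agent's decision as a JSON POST; the workflow decides how to execute it."""
    body = json.dumps({"patient_id": patient_id, "action": action, "reason": reason}).encode("utf-8")
    return urllib.request.Request(url, data=body, method="POST",
                                  headers={"Content-Type": "application/json"})


def hand_off(request: urllib.request.Request, timeout: float = 10.0) -> int:
    """Send the decision to n8n and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

The agent never touches WhatsApp or calendar credentials. Those stay inside n8n, so swapping the messaging provider means editing the workflow, not the agent.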

For businesses already running automation, the practical next step is not to tear everything out and replace it with an agent framework. It is to identify the decision points in your existing workflows where a human currently makes a judgment call and evaluate whether an agent can handle that decision reliably. Triage incoming support tickets by urgency. Decide whether a lead qualifies for outreach. Choose which follow-up sequence to trigger based on patient history. These are the integration points where agents add real value without requiring you to rebuild your entire stack.
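One practical way to test whether an agent handles a decision reliably is to let it act only above a confidence threshold and send everything else to a person. The sketch below shows that routing for ticket triage. `classify` is a placeholder for whatever model call you use, the keyword stub exists only so the example runs offline, and the 0.8 threshold is an arbitrary starting point.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    label: str
    confidence: float  # 0.0 to 1.0, as reported by the (hypothetical) classifier


def route_ticket(text: str, classify: Callable[[str], Decision], threshold: float = 0.8) -> str:
    """Let the agent decide only when it is confident; otherwise keep a human in the loop."""
    decision = classify(text)
    if decision.confidence >= threshold:
        return f"auto:{decision.label}"
    return "human_review"


def keyword_stub(text: str) -> Decision:
    """Crude stand-in for an LLM call, so the routing logic can be exercised offline."""
    urgent = any(phrase in text.lower() for phrase in ("outage", "down", "cannot log in"))
    return Decision("urgent", 0.9) if urgent else Decision("normal", 0.6)
```

Logging both the agent's label and the human's eventual decision on reviewed tickets gives you an agreement rate. That rate tells you when it is safe to lower the threshold and let the agent take over more of the decision.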

The Bottom Line

The open source AI agent landscape in 2026 has split into three distinct layers. Hermes Agent owns the self-improvement layer, building agents that genuinely get better with use and maintain deep cross-session memory. OpenClaw owns the reach and community layer, offering the fastest path to a working agent with the broadest ecosystem of pre-built skills, though its security track record demands careful deployment practices. Paperclip owns the orchestration layer, providing the organizational structure that turns a collection of individual agents into a coordinated workforce.

All three are MIT licensed. All three can run on your own infrastructure for under $20 a month in hosting. You do not have to pick just one, either: Paperclip can orchestrate both Hermes and OpenClaw agents, and teams are already pairing the two in hybrid deployments.

The businesses that will benefit most from these frameworks in 2026 are not the ones chasing the flashiest agent demo. They are the ones that already have a solid automation foundation and are ready to add an intelligence layer on top of it. If that sounds like where you are headed, book a free automation audit and we will map where an agent integration would create the most value in your current setup.